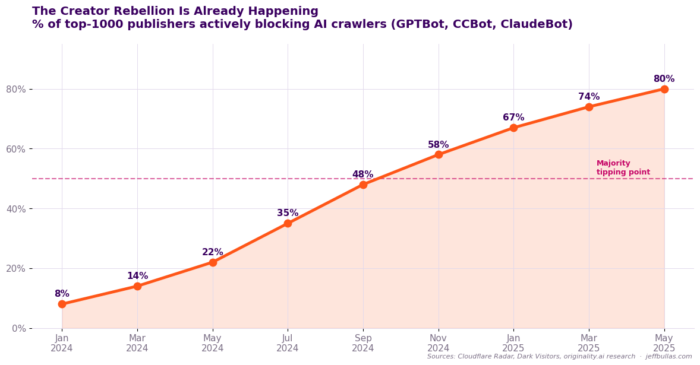

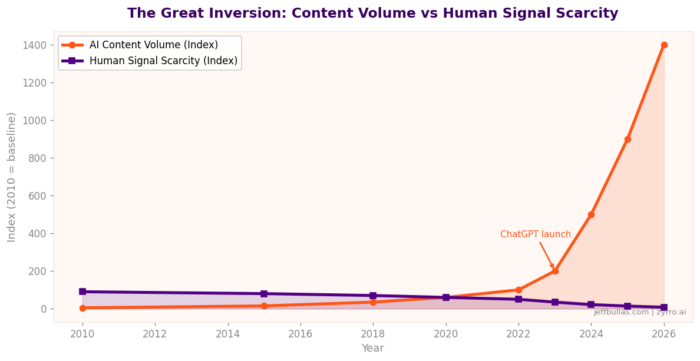

The internet used to reward information. Then it rewarded attention. Now AI has made both cheap.

A single prompt produces a 2,000-word article in thirty seconds. Optimized. Structured. Perfectly forgettable. The content flood is not coming. It has arrived. And the creators who built their entire business on publishing volume are already underwater.

So what actually comes next? Not a philosophical answer. A practical one.

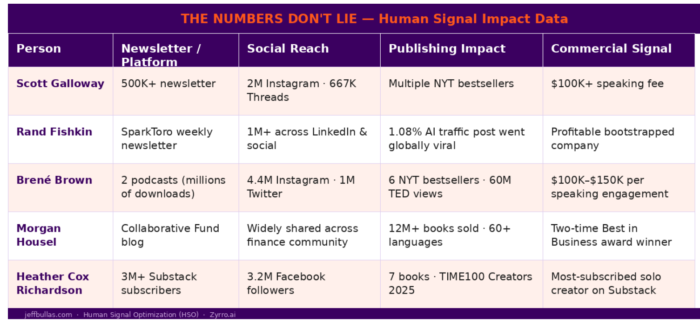

“The next scarce asset is human signal: the proof that a real person with taste, judgment, story, and lived expertise stands behind the work.”

This matters to me personally. The business model I built my business on over the last 17 years is over, and I have been searching for what comes next. Three creators I have researched and observed for years show exactly how human signal translates into a business that scales.

The Problem With Publishing More

For fifteen years, content marketing ran on one equation. More content equals more traffic. More traffic equals more leads. More leads equals more money.

It worked. For a long time, information was scarce enough that publishing it had inherent value. Then three things broke it.

- Facebook throttled organic reach.

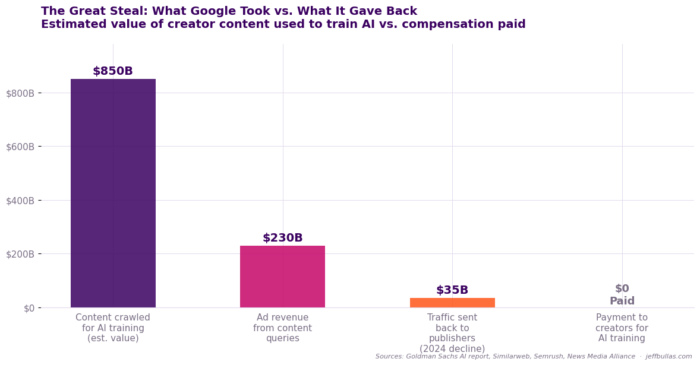

- Google answered questions directly without sending you the traffic.

- Then AI removed the cost of producing the content entirely.

Information is no longer scarce.

The explainer role, the person who synthesises, summarises, and teaches, is now contested by a machine that never sleeps, never charges overtime, and never has an off day.

The creators who built their identity entirely around being useful and educating are discovering, painfully, that usefulness alone is no longer a business, because AI is now everyone's informer and educator.

So the burning question is this: if the old business model is broken, what is the new one?

It requires you to have a human signal in a world of AI-generated slop.

What is a Human Signal?

Here is a diagnostic. Ask it about anything you publish.

“Could an AI have written this?”

Not: is it well-written? Not: is it accurate? Could an AI have written this?

If the answer is yes, and you cannot point to something specific that makes it irreducibly yours, then it is noise. Not signal.

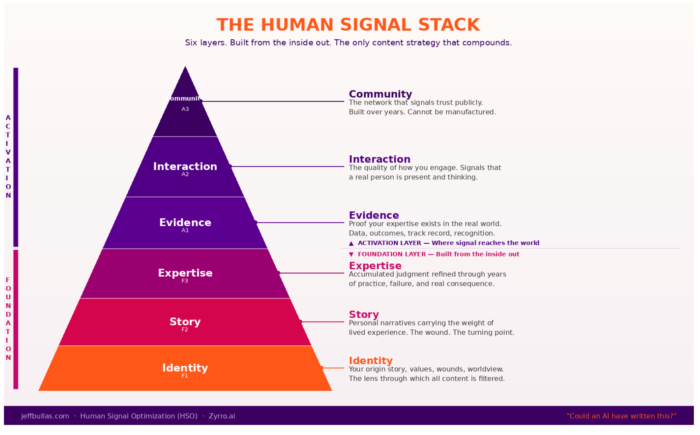

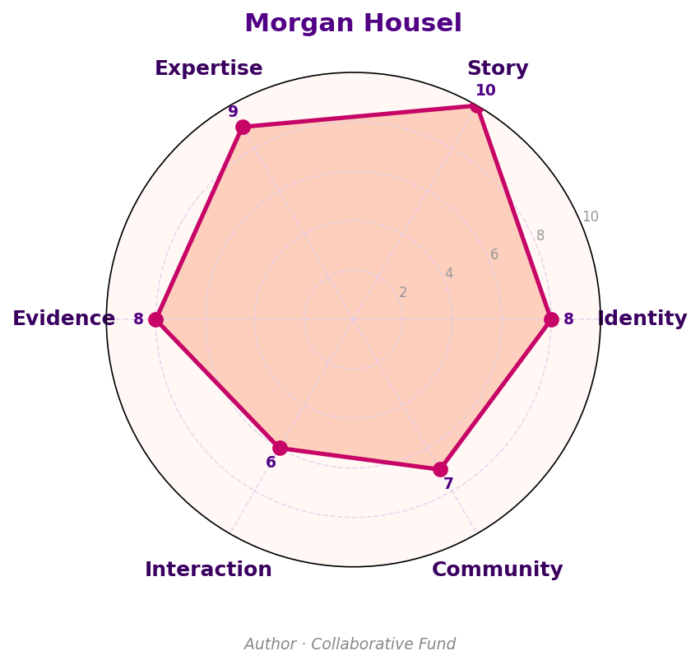

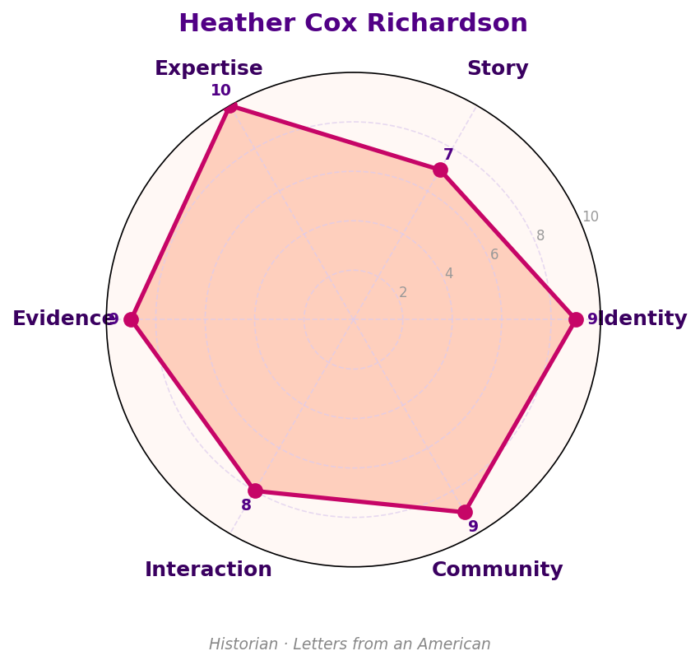

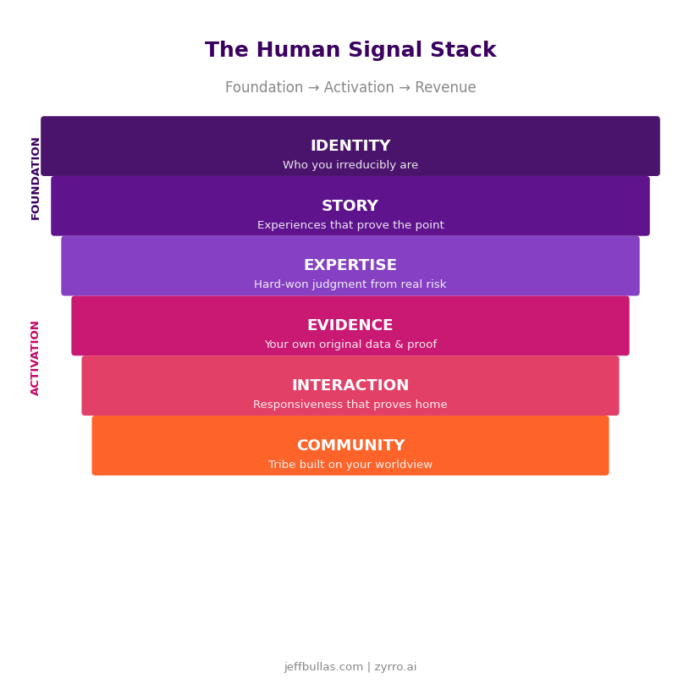

Your “Human Signal Stack” has six layers.

It starts with knowing your identity. If you don’t know who you are and what you stand for, you will be invisible, blending into the crowd and the noise online.

You need a point of view. An opinion. The willingness to take a stand for what you believe in. Knowing what you are angry about will also help.

Here is the Human Signal Stack that you need to build into everything you do as a creator.

- Identity. Not your job title. The constellation of who you actually are: your obsessions, your origin, your wound, your contradictions.

- Story. The specific experiences that prove your point. Real moments. Not hypotheticals.

- Expertise. Hard-won judgment from having been wrong enough times to know something true.

- Evidence. Your own original research. Your tracked experiments. Your documented failures.

- Interaction. The responsiveness that proves someone is home. Real replies. Real disagreements engaged.

- Community. The tribe that forms around your particular way of seeing — not just your topic.

The foundation layers: identity, story, and expertise. They are slow to discover and build but permanent once established.

The activation layers: evidence, interaction, and community. These compound over time.

A creator who has all six is almost impossible to replicate. A creator who has none is indistinguishable from the machine. Now. The real question. How does this become a business?

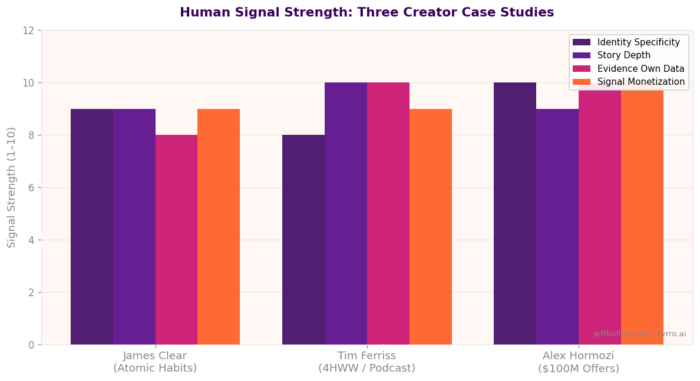

Here are three people worth studying. Look at their websites, read their writing, listen to their podcasts, buy their books. I have read all of their books, and Tim Ferriss’s The 4-Hour Workweek I have read perhaps three times.

Case Study 1: James Clear

James Clear is not a productivity expert.

There are thousands of productivity experts. Most of them are interchangeable. Most of them would fail the diagnostic question entirely.

Clear is something different. He is a man who fractured his skull in a high school baseball accident, spent months recovering, and used that experience to build a precise, personal understanding of how small habits compound over time. He did not read about resilience. He lived through a medical crisis and came out the other side with a specific theory about human behaviour, tested on himself first.

He launched a newsletter in 2012 before he had a book deal, a publisher, or a platform. What he had was identity. Story. And the discipline to write one idea, clearly, every week for years.

He did not publish more than anyone else. He published more consistently than almost anyone else, with more specificity and more personal authority behind every claim.

The “Atomic Habits” book sold over fifteen million copies. Not because it contained information nobody else had. But because the voice behind it was undeniably specific. You felt, reading it, that a real person had tested these ideas and paid something to arrive at them.

The business that grew around it — the courses, the speaking, the premium content — was not built on traffic volume. It was built on a reputation that could not be replicated because it was tied to a specific identity with a specific origin story.

The lesson: a narrow, deeply human point of view, published consistently over years, creates an audience that pays for access to the mind — not just the information it produces.

Case Study 2: Tim Ferriss

Tim Ferriss was not a business expert when he wrote The 4-Hour Workweek.

He was a supplement company founder who had worked himself into a breakdown, then spent a year conducting experiments on his own life to find a way out. The book was not research. It was a documented escape. Every claim traced back to something he personally tested on his own body, his own business, his own psychology.

That was the signal.

Not the ideas. Not the productivity frameworks. Plenty of people had written about outsourcing and lifestyle design before Ferriss. What nobody else had was the specific, verifiable, sometimes embarrassing account of one man running himself as a laboratory.

He extended that logic to his podcast. The Tim Ferriss Show does not position itself as an interview program. It positions itself as a place where one specific human being with documented obsessions, documented failures, and documented methods has access to world-class minds and is curious enough to extract what nobody else asks for. The signal is not the guest list. The signal is the host’s particular way of seeing.

Tim’s podcast has had over 700 million downloads.

The business that surrounds it — book deals, investments, brand partnerships — derives its value from the same source. Ferriss is not a media company. He is a specific identity that has earned the right, through documented experimentation and public vulnerability, to be trusted as a guide.

The lesson: publishing yourself as the evidence, not just the author, creates a signal that compounds. Every new experiment, every documented failure, every honest account of what worked adds another layer of proof. AI can produce advice. It cannot produce receipts.

Case Study 3: Alex Hormozi

Alex Hormozi built a gym. Then a gym licensing business. Then he watched it nearly collapse. Then rebuilt it. Then he sold it. Then did it again, at larger scale, across multiple industries.

He did not start creating content because he wanted to be a creator.

He started because he had accumulated, through genuine trial, failure, and recovery, a body of business knowledge so specific and so tested that he could not stop himself from publishing it. The signal was overwhelming. You could feel, watching his early videos, that this was a man who had been somewhere most business content creators had never been: the specific, unglamorous reality of running a failing business and refusing to quit.

He did not optimize for production quality. He optimized for specificity.

The numbers he cited were his own. The failures he described were documented. The methods he taught were the ones he had personally used to move from near bankruptcy to building a portfolio valued at over $100 million.

The book “$100M Offers” became one of the most widely read business books of recent years not because it contained sophisticated theory but because it contained brutal, operational specificity that only someone who had built and sold multiple businesses could produce. You cannot fake that level of detail. The detail itself is the proof.

The content he publishes is free. Deliberately. The business model is not content monetization. It is signal monetization. The content establishes an identity so credible, so specific, and so clearly backed by evidence that the offers that flow from it (equity investments, advisory relationships, acquisitions) command premium prices.

The lesson: radical specificity about your own failures and wins creates a signal that advertising budgets cannot replicate. Hormozi does not spend on paid acquisition. He does not need to. The signal does the work.

The Business Model Behind the Signal

Three different people. Three different industries. Three very different personalities.

Same underlying architecture.

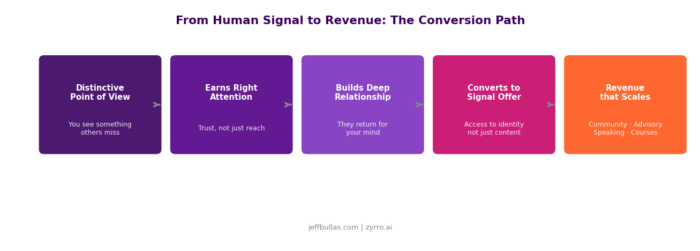

The path from human signal to revenue runs through a specific sequence.

A distinctive point of view earns attention. Not mass attention. The right attention. People who encounter the work and think: this person sees something I don’t. That is different from viral. Viral is cheap. Trust is expensive.

That attention compounds into relationship. Regular readers who come back not because you publish on a given topic but because they want to see what your particular mind does with it.

Relationship converts to transaction. Not through aggressive funnels. Through offers that feel like natural extensions of the signal itself.

- Clear’s readers buy his course because they want more of his thinking.

- Ferriss’s listeners buy his books and pay for event access because they trust the judgment behind them.

- Hormozi’s clients pay premium prices because the signal pre-qualifies the relationship.

None of them are selling information.

All of them are selling access to an identity.

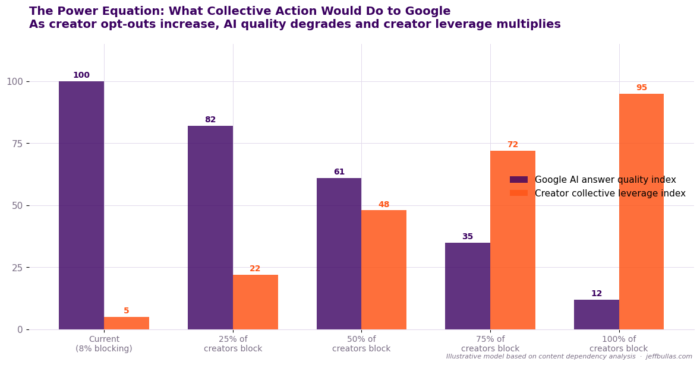

That is the human signal economy. And here is the economic reality that makes it durable: AI makes information infinitely cheap but it makes credible, proven human identity increasingly scarce.

The market price of the un-automatable is rising. Not as a cultural preference. As a structural market force.

The Villain Is Not AI

It would be comfortable to make AI the villain here.

It is not.

The villain is the system that trained creators to optimize for machines rather than for humans. The SEO machine that rewarded keyword density over insight. The social media algorithm that punished nuance and amplified outrage. The content marketing industrial complex that turned genuine human curiosity into production quotas.

AI did not create the problem. It exposed it.

It took machine optimization to its logical endpoint and showed us where that road terminates: a world of infinite content with zero signal.

The creators who suffer most in the AI era are not the ones who used AI. They are the ones who had already become like AI, producing content that could have been written by anyone, for anyone, about anything, with no particular skin in the game.

If your content sounds like it was generated, the problem is not the tool. It is the absence of you.

What You Actually Do Next

Three practical moves. Not philosophical. Not aspirational. Executable this week.

First: run the diagnostic on your last ten pieces of content. Could an AI have written each one? Be honest. Mark the ones where the answer is yes. Those are your exposure. The places where you have been competing with an infinitely scalable machine.

Second: identify the one story from your own life that most directly proves your central argument. The specific moment. The specific cost. The specific insight it produced. Write it. Not as a personal essay. As the opening of your next piece of professional content. See what happens.

Third: stop optimizing for reach. Start optimizing for recognition. Reach measures how many people saw something. Recognition measures how many people thought: that could only have come from that person. One of those metrics builds a durable business. The other builds a treadmill.

James Clear spent eight years writing before Atomic Habits became a cultural phenomenon.

Tim Ferriss filmed himself relentlessly before the audience became a business.

Hormozi posted for years at zero production value before the signal broke through.

None of them found a shortcut. All of them found something more valuable: an identity specific enough to be irreplaceable.

The future does not belong to creators who publish more.

It belongs to creators who become harder to fake.

In the AI age, the most valuable content will not be the content that sounds the smartest. It will be the content that proves someone real is home.

The post How Creators Make Money When AI Makes Content Free appeared first on jeffbullas.com.